Electromagnetic signals travel very quickly. The fastest they can go is the speed of light in a vacuum (c), but in practice communications signals tend to travel at some large proportion of that speed. In copper wires they can travel at between 40% and 80% of c.

Fiber Optic Network

Surprisingly, fiber optic signals travel at comparable rates, around 70% of the speed of light in a vacuum.

That means that in the best possible circumstances, a signal can theoretically circumnavigate the world in about a fifth of a second.
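As a rough check on the "fifth of a second" figure, the sketch below divides distance by signal speed. It assumes an equatorial circumference of about 40,075 km and a single uninterrupted path; the constant and function names are illustrative. At 70% of c the trip comes out at roughly 0.19 seconds, and the loop also shows the copper range quoted above.

```python
# Rough propagation-time estimate: distance divided by signal speed.
# Assumptions (not from the original post): Earth's equatorial
# circumference of ~40,075 km and one straight, uninterrupted path.

SPEED_OF_LIGHT_KM_S = 299_792.458  # c in a vacuum, km/s
EARTH_CIRCUMFERENCE_KM = 40_075.0  # approximate equatorial circumference


def propagation_time_s(distance_km: float, velocity_factor: float) -> float:
    """Seconds for a signal to cover distance_km at velocity_factor * c."""
    return distance_km / (velocity_factor * SPEED_OF_LIGHT_KM_S)


if __name__ == "__main__":
    # 40% and 80% bracket copper; 70% is the fiber figure used above.
    for factor in (0.4, 0.7, 0.8):
        t = propagation_time_s(EARTH_CIRCUMFERENCE_KM, factor)
        print(f"{factor:.0%} of c: {t * 1000:.0f} ms around the world")
```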

Sending signals into space and bouncing them off a satellite does not avoid the problem either. A geostationary satellite orbits about 35,786 km above the equator, so the trip up to it and back down alone takes roughly a quarter of a second.
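To put a rough number on the satellite case, the sketch below computes the best-case extra delay of one geostationary hop. It assumes (this is not from the original post) an orbit altitude of about 35,786 km, ground stations directly beneath the satellite, and signals travelling at c the whole way; the function name is illustrative. Under those assumptions one hop adds roughly 0.24 seconds.

```python
# Minimum extra delay for one geostationary satellite hop.
# Assumptions: ground stations directly beneath the satellite (the best
# case) and signals travelling at c through space and air.

SPEED_OF_LIGHT_KM_S = 299_792.458
GEO_ALTITUDE_KM = 35_786.0  # geostationary orbit altitude above the equator


def geo_hop_delay_s(altitude_km: float = GEO_ALTITUDE_KM) -> float:
    """Best-case one-way delay: up to the satellite and back down."""
    return 2 * altitude_km / SPEED_OF_LIGHT_KM_S


if __name__ == "__main__":
    print(f"One satellite hop adds at least {geo_hop_delay_s() * 1000:.0f} ms")
```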

Modern Internet clients send requests to many different servers, and each request accrues some delay because of the inherent physical limits of communication technology (and of the universe).

Unfortunately, no network on Earth is a straight-line connection between two points. Many intermediate stages affect how quickly a signal propagates. Signals always pass through routers and network switches: at least one at each end and, over long distances, fifty or more, since the number tends to grow with distance. Each node in the network adds a small amount of time to the propagation and therefore to the latency that the user experiences.
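The accumulation described above can be modeled, very crudely, as propagation delay plus a fixed cost per hop. In this sketch the per-hop delay, hop counts, and distances are hypothetical placeholders rather than measurements, and real per-hop delays vary with load and hardware.

```python
# Toy one-way latency model: propagation delay plus a fixed cost per hop.
# The per-hop figure and hop counts used below are hypothetical; real
# router and switch delays vary widely.

SPEED_OF_LIGHT_KM_S = 299_792.458


def one_way_latency_ms(distance_km: float, hops: int,
                       per_hop_ms: float, velocity_factor: float = 0.7) -> float:
    """Estimate one-way latency in milliseconds."""
    propagation_ms = distance_km / (velocity_factor * SPEED_OF_LIGHT_KM_S) * 1000
    switching_ms = hops * per_hop_ms  # every router/switch adds its own delay
    return propagation_ms + switching_ms


if __name__ == "__main__":
    # Hypothetical comparison: a nearby server vs. one on another continent.
    near = one_way_latency_ms(distance_km=200, hops=5, per_hop_ms=0.5)
    far = one_way_latency_ms(distance_km=9_000, hops=20, per_hop_ms=0.5)
    print(f"near: {near:.1f} ms, far: {far:.1f} ms")
```

Both terms grow with distance, which is why moving the server closer helps twice: fewer kilometres and fewer hops.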

How efficiently a router forwards traffic depends on its load, its age, and the computing power it has available. These small delays add up until, in many cases, the user waits several seconds, which makes for a poor experience and can significantly affect many factors that matter to sysadmins and other network users.
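To illustrate why load matters so much, the sketch below uses the textbook M/M/1 queueing model. This model is swapped in here purely for illustration; it is a simplification, not a description of how any real router behaves. In it, the average time a packet spends in a node is 1 / (service rate - arrival rate), so delay climbs steeply as traffic approaches capacity. The capacity figure is hypothetical.

```python
# Why load matters: in the textbook M/M/1 queueing model (an assumed
# simplification, not a model of any specific router), the average time
# a packet spends in a node is 1 / (service_rate - arrival_rate).


def mm1_delay_s(service_rate: float, arrival_rate: float) -> float:
    """Average time in system (queueing + service) for an M/M/1 queue."""
    if arrival_rate >= service_rate:
        raise ValueError("queue is unstable when load reaches capacity")
    return 1.0 / (service_rate - arrival_rate)


if __name__ == "__main__":
    capacity = 1_000_000  # hypothetical: packets per second a node can forward
    for load in (0.5, 0.9, 0.99):
        delay_us = mm1_delay_s(capacity, load * capacity) * 1e6
        print(f"load {load:.0%}: {delay_us:.0f} microseconds per packet")
```

Going from 50% to 99% load raises the per-packet delay in this model from about 2 to about 100 microseconds, a fiftyfold increase for less than double the traffic.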

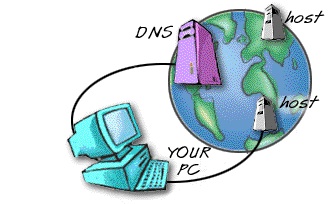

In this respect, DNS is no different from other Internet traffic: the further DNS requests travel and the less reliable the networks they cross, the higher the latency. The effect is especially important for DNS because a site cannot start loading until its name has been resolved, so high DNS latency can hold up the whole page for several seconds. The solution is to put copies of the distant servers in many locations and connect them with a very high-speed network. That is, in effect, what a global DNS provider does. By placing copies of its DNS services around the world and routing each request to the nearest server, it considerably reduces the delays caused by distance and by routing and switching, resulting in a fast, responsive DNS service.
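The "route each request to the nearest server" idea can be sketched as choosing the point of presence (PoP) with the smallest great-circle distance to the client. This is only an illustration under stated assumptions: the PoP names and coordinates are hypothetical, and geography stands in for latency. Production global DNS networks commonly use anycast routing or measured latency instead, and the post does not say which technique its provider uses.

```python
# Pick the geographically nearest DNS point of presence (PoP).
# Assumptions: the PoP list is hypothetical, and distance stands in for
# latency. Real networks often use anycast or measured latency instead.
import math

EARTH_RADIUS_KM = 6_371.0

POPS = {  # hypothetical PoPs: name -> (latitude, longitude)
    "london": (51.5074, -0.1278),
    "new-york": (40.7128, -74.0060),
    "tokyo": (35.6762, 139.6503),
}


def haversine_km(a: tuple[float, float], b: tuple[float, float]) -> float:
    """Great-circle distance between two (lat, lon) points in kilometres."""
    lat1, lon1, lat2, lon2 = map(math.radians, (*a, *b))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def nearest_pop(client: tuple[float, float]) -> str:
    """Return the name of the PoP closest to the client."""
    return min(POPS, key=lambda name: haversine_km(client, POPS[name]))


if __name__ == "__main__":
    paris = (48.8566, 2.3522)
    print(nearest_pop(paris))  # london
```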

Global DNS Hosting

A global DNS hosting service with DNS load balancing has other benefits too. Having many servers in different locations obviously increases redundancy and resilience. The best DNS hosting doesn’t rely on just a couple of servers in one data center, but spreads the risk of failure across many servers in numerous data centers.
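A simple way to see why spreading servers across data centers helps: if each site is independently unavailable with probability p, all n sites are down at once with probability p^n. Independence is an assumption (a shared upstream provider or a common software bug can take several sites down together), and the per-site figure below is hypothetical.

```python
# Chance that every site is down at once, assuming each data center fails
# independently with the same probability p. Independence is an
# idealization: shared upstream networks or software bugs correlate failures.


def prob_all_down(p: float, sites: int) -> float:
    """Probability that all `sites` independent sites are unavailable."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be a probability")
    return p ** sites


if __name__ == "__main__":
    p = 0.01  # hypothetical per-site unavailability
    for n in (1, 2, 4, 8):
        print(f"{n} site(s): P(total outage) = {prob_all_down(p, n):.0e}")
```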

With DNS failover strategies in place, this redundant network can almost instantly replace servers in inaccessible regions with healthy ones. Using a global DNS network with a managed DNS service is an important part of ensuring that network traffic is routed quickly and efficiently to its destination with minimal latency.
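Failover logic can be sketched as: drop records whose servers fail a health check, prefer healthy servers in the client's region, and fall back to healthy servers elsewhere. The `Record` type, region labels, and health flags are hypothetical, and the addresses come from the reserved documentation ranges. In practice, how quickly clients see the change also depends on the record's TTL, since resolvers may keep serving a cached answer until it expires.

```python
# Minimal DNS failover sketch: answer with healthy records from the
# preferred region, otherwise fall back to healthy records elsewhere.
# Record layout, regions, and health flags are hypothetical; a managed
# DNS service runs its own health checks and TTL handling.
from dataclasses import dataclass


@dataclass
class Record:
    ip: str
    region: str
    healthy: bool


def answer(records: list[Record], preferred_region: str) -> list[str]:
    """Return IPs to serve, preferring healthy servers in preferred_region."""
    healthy = [r for r in records if r.healthy]
    local = [r.ip for r in healthy if r.region == preferred_region]
    return local or [r.ip for r in healthy]


if __name__ == "__main__":
    records = [
        Record("192.0.2.10", "eu", healthy=False),  # failed health check
        Record("198.51.100.20", "us", healthy=True),
        Record("203.0.113.30", "ap", healthy=True),
    ]
    print(answer(records, "eu"))  # EU is down, so serve the other regions
```

With the EU server failing its check, a request that prefers "eu" receives the two healthy addresses in the other regions instead.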

About the author: Evan Daniels is the technical writer for DNS Made Easy, a leading provider of DNS hosting. Follow them on Twitter @dnsmadeeasy.