November 13, 2018

Advanced analytics, the blockchain, artificial intelligence (AI), cloud, virtualization – there are so many technologies affecting the landscape of information technology. The rapid advancement of these technologies, along with the emergence of new ones, allows fast, efficient processing and storage of vast amounts of data.

Data centers – networks of server facilities that remotely store, process, and share vast amounts of information – are a perfect example of where all of these technologies make a considerable impact. The rapid rise of AI and machine learning, IoT connectivity, business process automation, and blockchain applications has contributed to the shift from monolithic, centralized architectures to decentralized deployments.

With data needs rising rapidly, data centers have to keep up with the pace of technological innovation to meet them. For example, global IP traffic is predicted to increase nearly threefold over the next five years.

In this article, let’s take a look at how technologies like artificial intelligence and the cloud have changed data centers and what the future holds for them.

Artificial Intelligence (AI) in the Data Center

All industries stand to benefit from artificial intelligence, and data storage and processing are no exception. Many well-known companies, including Google, have chosen AI technologies to advance their data centers. In 2014, the search giant announced on its blog that it was using machine learning to create “better data centers” that would use only half the energy of a typical data center.

The company even released a white paper explaining how to apply machine learning to optimize data centers. According to the paper’s author, an engineer on Google’s data center team, the team used machine learning to improve management and optimize operations related to cooling, temperature, and the IT load.

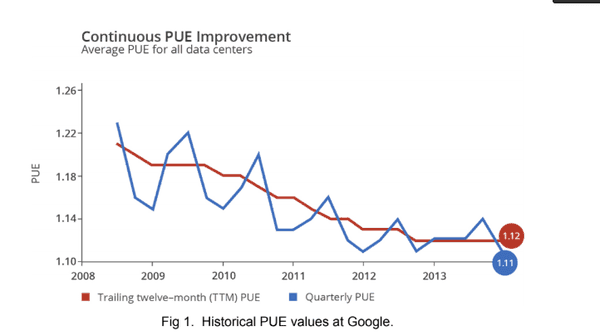

For example, thanks to predictive models powered by machine learning, PUE – a measure of energy efficiency – has been improved significantly in recent years.

Photo Source: google.com

As you can see in the image, the 2008’s value of PUE – 1.21 was improved to 1.12, all thanks to the implementation of machine learning models and other AI-powered technologies.
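To put the two PUE figures in perspective, here is a minimal Python sketch. It relies on the standard definition of PUE (total facility energy divided by IT equipment energy); the 1,000 kWh IT load and the function names are hypothetical and chosen only for illustration.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power usage effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def overhead_per_it_unit(pue_value: float) -> float:
    """Energy spent on cooling, power delivery, etc. per unit of IT energy."""
    return pue_value - 1.0


# PUE values quoted in the article.
PUE_2008 = 1.21
PUE_IMPROVED = 1.12

# Hypothetical IT load, used only to make the numbers concrete.
it_load_kwh = 1000.0

total_2008 = PUE_2008 * it_load_kwh
total_improved = PUE_IMPROVED * it_load_kwh

# Relative reduction in overhead energy and in total facility energy
# for the same IT load.
overhead_cut = 1 - overhead_per_it_unit(PUE_IMPROVED) / overhead_per_it_unit(PUE_2008)
total_cut = 1 - total_improved / total_2008

print(f"Total energy at PUE 1.21: {total_2008:.0f} kWh")
print(f"Total energy at PUE 1.12: {total_improved:.0f} kWh")
print(f"Overhead reduced by {overhead_cut:.1%}, total energy by {total_cut:.1%}")
```

Under these assumptions, the drop from 1.21 to 1.12 cuts the non-IT overhead by roughly 43 percent, while total facility energy for the same IT load falls by about 7 percent.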

Naturally, many other companies will follow suit, and those with sufficient capabilities already have. Among them is Hewlett Packard Enterprise (HPE), which is working toward self-managing data centers by utilizing machine learning.

In a white paper called “Can Machine Learning Prevent Application Downtime?” HPE explains why this technology is much better than humans at predicting and preventing downtime. In fact, here’s the full list of capabilities of machine learning as described in the white paper:

- Downtime prediction

- Automatic downtime prevention

- Prescribed resolutions

- Rapid root-cause analysis

- Cross-stack application of analytics

- Measured availability metrics

- Analytics-driven tech support

With all these capabilities and more on the way, we can safely assume that AI will play an important role in the future of data center technology.

Data Center Cloud Solutions

Cloud computing has already changed a lot in the world of data centers and is poised to bring even more change in the coming years. In fact, some sources, such as Cisco, predict that cloud data centers will largely replace traditional data centers within three years.

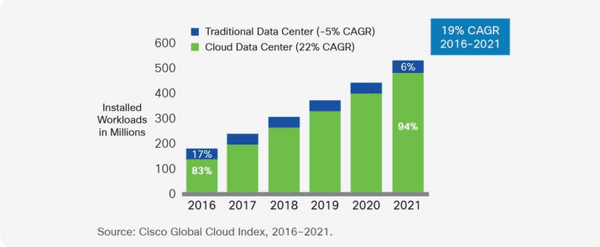

Specifically, Cisco claimed that 94 percent of workload and compute instances will be processed by cloud data centers by 2021, which means that only six percent of the workload will be managed by traditional data centers.

Photo Source: Cisco Global Cloud Index, 2016-2021 report

With the rapid adoption of cloud data centers, it becomes quite clear that we’re in the middle of the cloud computing revolution.

“Cloud data centers offer increased performance, higher capacity, and greater ease of management compared with traditional data centers,” according to Cisco.

For example, one of the most important factors currently prompting the migration from traditional data centers to cloud data centers is the greater degree of virtualization in the cloud space. Virtualization is the process of abstracting the physical servers in a data center facility, along with storage, into software-defined resources, which greatly reduces the cost of facilities, hardware, and cooling and simplifies maintenance and administration.

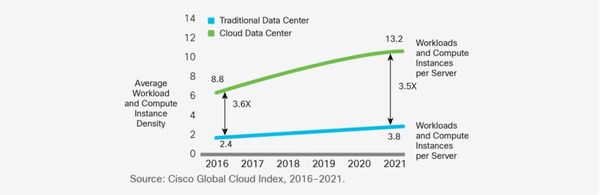

The degree of virtualization is expressed as workload and compute instance density; the value of these factors for cloud-based data centers will grow from 8.8 in 2016 to 13.2 by 2012, according to the aforementioned Cisco report.

Virtualization, cloud data servers vs traditional servers

Evidently, the workload and compute instance density for traditional servers will increase from 2.4 in 2016 to 3.8 by 2021. The superiority of cloud-based servers here is clear, so it’s safe to assume that this factor will continue to contribute to the migration from traditional data centers to cloud centers.
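To see what these density figures mean in practice, the following Python sketch estimates how many physical servers a given number of workloads would need at each density. It assumes density means workloads per physical server; the workload count of 1,000 and the helper name `servers_needed` are hypothetical and only illustrate the arithmetic.

```python
import math

# Workload and compute instance densities quoted from the Cisco report.
DENSITY = {
    ("cloud", 2016): 8.8,
    ("cloud", 2021): 13.2,
    ("traditional", 2016): 2.4,
    ("traditional", 2021): 3.8,
}


def servers_needed(workloads: int, density: float) -> int:
    """Physical servers required to host `workloads` at the given density."""
    if density <= 0:
        raise ValueError("density must be positive")
    return math.ceil(workloads / density)


# Hypothetical fleet size, chosen only for illustration.
WORKLOADS = 1_000

for (kind, year), density in sorted(DENSITY.items()):
    count = servers_needed(WORKLOADS, density)
    print(f"{kind:<12} {year}: {count} servers for {WORKLOADS} workloads")
```

With the 2021 figures, the same 1,000 workloads would need 76 servers in a cloud data center versus 264 in a traditional one, which is where the savings on hardware, cooling, and facilities come from.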

What Is Non-Volatile Memory Express (NVMe)?

Every aspect of data storage, processing, and distribution has to get better to satisfy the ever-growing demand for speed and efficiency. Traditional storage interfaces such as Advanced Technology Attachment (ATA) and the Small Computer System Interface (SCSI) are rapidly becoming incapable of meeting these needs, so a newer storage protocol, Non-Volatile Memory Express (NVMe), has been gaining popularity in recent years.

An NVMe controller dramatically increases both the number of queues available and the depth of each queue. According to the State of NVMe: Perceptions and Misconceptions report by ActualTech Media, NVMe supports up to 64K queues, with each queue capable of holding up to 64K commands.

This translates into more than 4 billion outstanding commands on a large system; compare that to the number of commands available with a spinning disk controller – up to 256 – and it becomes apparent why NVMe is becoming so popular.
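The “more than 4 billion” figure follows directly from multiplying the queue count by the queue depth. The short Python sketch below reproduces that arithmetic, taking “64K” as 65,536 (an assumption made for round numbers) and using the article’s figure of 256 commands for a spinning disk controller.

```python
# "64K" is taken as 64 * 1024 = 65,536 here; this is an assumption for
# round arithmetic based on the figures quoted in the article.
NVME_QUEUES = 64 * 1024
NVME_QUEUE_DEPTH = 64 * 1024

# Article's figure for a spinning disk controller.
LEGACY_COMMANDS = 256

# Theoretical maximum number of outstanding commands.
nvme_outstanding = NVME_QUEUES * NVME_QUEUE_DEPTH
ratio = nvme_outstanding // LEGACY_COMMANDS

print(f"NVMe:   {nvme_outstanding:,} outstanding commands")
print(f"Legacy: {LEGACY_COMMANDS:,} outstanding commands")
print(f"NVMe allows {ratio:,} times as many")
```

This is a theoretical ceiling rather than a number any single system is likely to reach, but it shows the scale of the difference in parallelism.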

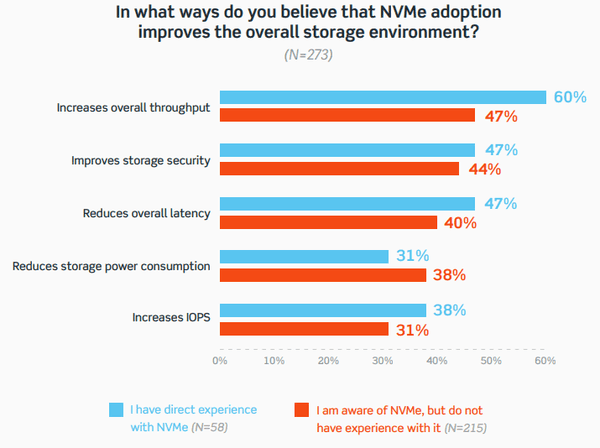

The report by ActualTech Media also outlined some of the most impacting benefits of NVMe as reported by respondents in their survey. Here as the complete results.

Photo Source: Source: State of NVMe: Perceptions and Misconceptions report, ActualTech Media

As you can see, the survey demonstrated a fairly high awareness of the benefits of NVMe technology even among those who didn’t have any experience using it. The claims of the benefits are supported by people who have used the technology, too.

Many industries and applications stand to benefit from an NVMe infrastructure, according to the report.

These findings indicate that people are aware of the diverse benefits of NVMe technology, which suggests that its adoption will continue to accelerate. High-end enterprises aren’t the only users of NVMe: the data technology industry is quickly ramping up adoption among small businesses, helping them improve decision making and deliver better solutions to clients.

The Bottom Line

Data centers are constantly changing. Right now, we’re seeing the data industry adopt a wide range of new technologies, with the ones described above being some of the most important. They greatly enhance the functions and capabilities of data centers and accelerate the shift to other, even more advanced technologies that will be needed in the future.