
April 28, 2016

UPDATE (April 2016): Project Natick is about to get larger. Project leader Ben Cutler told a New York conference in late April 2016 that the program is ready for the big leagues.

This means that the testing they’ve done (detailed below) is not a one-time thing, but something that’s about to be expanded towards commercialization.

The test involved one rack, sealed underwater for three months at a depth of roughly 30 ft off the Pacific coast of the United States.

Photo Credit: datacenterdynamics.com (linked above)

Cutler stated that additional projects are slated for testing using larger racks and submerging them to depths of up to 600 ft.

The next step in the project will also begin to adopt technologies for drawing renewable energy from the tides and currents of the ocean.

This is a fascinating project that only gets cooler by the month. If you’re not familiar with Project Natick, please continue reading. Otherwise, stay tuned for more updates.

(From March 03 2016)

A little over 70 percent of this giant rock is covered in water, yet we’re still crowding the parts of it that aren’t with giant data centers. Good thing Microsoft is finally realizing this and starting Project Natick, an experiment to submerge a data center in the ocean.

I know what you’re thinking: Complicated computer parts and water typically don’t mix. That’s so 1990s of you. This is the 21st century and we can put electronics under water now.

However, there are some pitfalls to doing this, exemplified by the fact that no one has been able to create an ocean-powered generator that lasts more than two years. Still, if we can dam rivers, then we can use the ocean too (we just have to work out the kinks of controlling a nigh-unstoppable force).

Microsoft’s bold undertaking looks something like this:

It’s a good thing they know what they’re doing. But do they? According to Norm Whitaker, head of special projects for Microsoft Research NExT, the team behind Project Natick is a small group that looks at “moonshot projects.”

We’re a small group, and we look at moonshot projects – Norm Whitaker, Microsoft Research NExT

For Project Natick to be considered a “moonshot,” there have got to be some pitfalls, but let’s first explore the benefits of an underwater data center.

Are There Benefits to an Underwater Data Center?

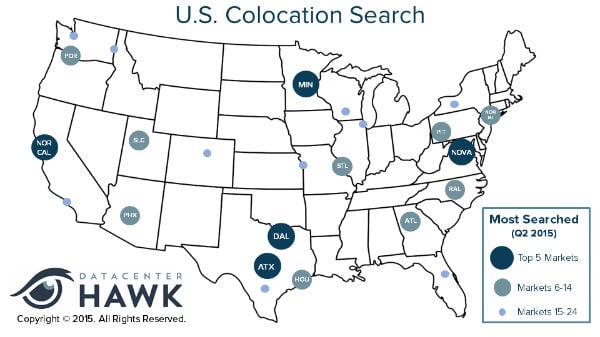

In short, yes. Microsoft states that “50 percent of us live near the coast. Why doesn’t our data?” It would make sense, then, that the ocean would be a viable place for data centers as far as latency is concerned. There is some basis to this if we look at the most-searched-for data center locations (specifically for colocation) in 2015, according to Data Center Hawk:

Photo Credit: datacenterhawk.com

Notice that three of the top five most-searched-for data center locations are in the middle of the country, away from the coast.

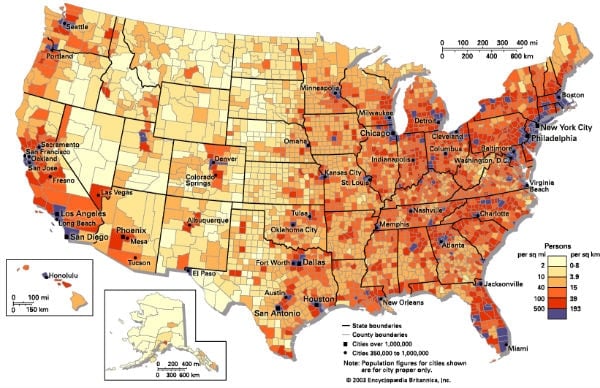

Compare that with the population density:

Photo Credit: britannica.com

While obvious reasons for this exist (such as cheaper land leading to cheaper hosting costs), those reasons would matter far less when storing data in the ocean. Having data stored essentially next to these populated areas would reduce the need for many points of presence (PoPs) spread around the country, an arrangement that can lead to lag.
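To put a rough number on the latency argument, here is a minimal sketch that estimates the best-case round-trip delay from distance alone. It assumes light in optical fiber travels at about two-thirds the speed of light (roughly 200,000 km/s) and ignores routing, queuing, and server time. The function name `round_trip_ms` and both distances are hypothetical illustrations, not figures from Microsoft or Data Center Hawk.

```python
# Rough propagation-delay estimate for a round trip over optical fiber.
# Assumption: light in fiber travels at about two-thirds of c (~200,000 km/s).
# The distances below are hypothetical illustrations, not measurements.

SPEED_IN_FIBER_KM_PER_S = 200_000.0


def round_trip_ms(distance_km: float) -> float:
    """Return the minimum round-trip propagation delay in milliseconds."""
    if distance_km < 0:
        raise ValueError("distance_km must be non-negative")
    one_way_s = distance_km / SPEED_IN_FIBER_KM_PER_S
    return 2 * one_way_s * 1000.0


if __name__ == "__main__":
    scenarios = [
        ("offshore, next to a coastal city", 50.0),   # hypothetical distance
        ("inland hub, mid-country", 1500.0),          # hypothetical distance
    ]
    for label, km in scenarios:
        print(f"{label}: {round_trip_ms(km):.1f} ms round trip")
```

The physics only sets a floor. Real-world latency is higher, but the floor scales directly with distance, which is the whole point of parking the data next to the people using it.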

Another benefit would be tidal power. This seems obvious, as the tides never, ever stop (and if they did, we’d be in big trouble). This would basically be like placing a data center within a power plant or, even better, within a generator. Those electrical components probably wouldn’t play too nicely together in that type of environment. (Now let’s add water to the equation… actually, just keep reading. We’ll get there.) But, if utilized properly, this effectively unlimited power source would dramatically reduce the power cost compared to an inland data center. In fact, it’s renewable, reusable, constant, uninterruptible, and everything else that we’re always looking for in a power source.

Project Natick Team Members (Photo Credit: microsoft.com, linked above)

Going along with the power aspect is the reduced cost of cooling. This is fairly self-explanatory, but constantly cycling water makes for an amazing cooling method (just ask anyone who water-cools their PC).
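For a feel of how much cooling can matter on the power bill, here is a back-of-the-envelope sketch. It treats cooling as extra energy on top of the servers’ own draw and prices the total for a year. The function `yearly_energy_cost`, the 100 kW load, both overhead fractions, and the electricity price are all made-up placeholders for illustration, not numbers from Microsoft.

```python
# Back-of-the-envelope yearly electricity bill for a data center.
# Every number used here is a hypothetical placeholder, not a Microsoft figure.

HOURS_PER_YEAR = 24 * 365


def yearly_energy_cost(it_load_kw: float,
                       cooling_overhead: float,
                       price_per_kwh: float) -> float:
    """Cost of running the IT load plus cooling for one year.

    cooling_overhead is the extra energy spent on cooling, as a fraction of
    the IT load (0.5 means cooling adds 50 percent on top of the servers).
    """
    if it_load_kw < 0 or cooling_overhead < 0 or price_per_kwh < 0:
        raise ValueError("inputs must be non-negative")
    total_kw = it_load_kw * (1.0 + cooling_overhead)
    return total_kw * HOURS_PER_YEAR * price_per_kwh


if __name__ == "__main__":
    # Hypothetical comparison: same servers, different cooling overhead.
    on_land = yearly_energy_cost(100.0, 0.5, 0.10)
    in_ocean = yearly_energy_cost(100.0, 0.1, 0.10)
    print(f"land-based (assumed 50% overhead): ${on_land:,.0f} per year")
    print(f"submerged (assumed 10% overhead):  ${in_ocean:,.0f} per year")
```

Swap in your own load, overhead, and price. The takeaway is only that the bill scales with the cooling overhead, so anything that cools for free (like an ocean) shows up directly in the total.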

While there would be other benefits, the main focus of any data center is power, cooling, and latency, all of which an underwater data center would handle very well. But let’s get back to the potential issues.

What Are Some Pitfalls to Submerging a Data Center?

Politics

If you hail from the United States, you’ve probably heard of California’s drought by now. It’s bad, scary bad. Which is odd, because California has a gigantic body of water on its left side. Logic would argue for building desalination plants, but do you think the powers-that-be could agree on anything of the sort? Of course not. This isn’t Australia.

Photo Credit: microsoft.com (linked above)

So while the crops shrivel and people pay exorbitant fines for showering, the endless amount of water in the ocean remains too dirty and salty to use.

Believe it or not, that wasn’t a soap-box. That’s just what happens when humanity tries to do something cool and innovative involving the ocean. I don’t know if it’s too scary for some or what, but for some reason troves of money are spent researching projects that are ultimately scrapped. (Data Center Knowledge has a pretty cool list of such projects here.)

Maintenance

I’m not too familiar with Aquaman, but from what I remember he does not have any type of IT experience. That’s unfortunate because he could maintain these centers without a giant tank of oxygen strapped to his back.

Alas, evolution has failed to grace us with gills, so we have to dredge up what we’ve already submerged. Sadly, most tech companies do not have their own ocean-dredging departments, so they have to rely on third-party cranes to pull their data centers from the depths. One little slip-up…

Photo Credit: microsoft.com (linked above)

But the worst part of it all is having to do all that in the first place. On land, when something goes wrong with the hardware, you just send someone into the center and they fix whatever needs to be fixed. You don’t have to hire a whole excavation team. While it makes for good drama, I’m sure it would get annoying.

The solution here would be to build underwater facilities where people could take submarine shuttles to and from work and just hang out there all day. That would be cool, but the cost would be far too high. At that point, you might as well just host in Hoboken.

The cool thing about Project Natick, however, is that there are very few moving parts (externally). This contrasts with most ocean-powered devices, which typically have hinges, buoys, turbines, etc. Having fewer moving parts will most certainly help keep maintenance costs down.

Power

Tidal power is a tricky thing. But wait, didn’t you list this as an advantage? Yes. However, Microsoft doesn’t plan on powering its submerged data center via the ocean. There just isn’t a reliable way to do that yet. So for right now, power counts as both an advantage and a disadvantage of a submerged data center. (To see the ways scientists are using water for power, check out this list by Green Tech Media.)

Will an Underwater Data Center Actually Happen Anytime Soon?

In short, no. On a small scale, Microsoft projects at least 15 more years. On a large scale it’ll take perhaps double that. The modular data center is just beginning to take hold. Taking that idea from land to sea will take some time. It’s just human nature.

The idea of a submerged data center is fascinating nonetheless. Keeping an eye on Microsoft’s Project Natick is certainly worth your time.