On this Page

- What Does a Data Center Look Like?

- Where Is the Processor Located in a Data Center?

- The Importance of High Clock-Rate

- Top Players on the Data Center Processors Market

Processors in data centers have come a long way since the first server rooms. In this article, we cover:

- a general overview of data centers and how processors fit into the picture

- the current state of the processor industry – market size, main players

- trends for the future

What Does a Data Center Look Like?

The size, structure, and layout of data centers vary, but they all have one thing in common: clusters of interconnected servers. Each server is a high-performance computer with large amounts of storage and memory, substantial processing power, and input/output (I/O) capabilities.

In simple terms, a server is a scaled-up version of a personal computer, with a much more powerful central processing unit (CPU, or processor) and more memory, but without the monitor, keyboard, and other peripheral devices we are used to seeing on our PCs. Instead, monitors can be placed at a central console from which operators control and supervise groups of clusters and the connected equipment. Server racks are usually arranged in rows so that airflow and temperature can be controlled more efficiently.

Where Is the Processor Located in a Data Center?

In every data center, the processors sit inside the servers in the so-called machine room. This room has the strictest air-conditioning requirements in the facility, so its cooling must be of very high quality, and disaster response mechanisms must be in place.

Virtual Processors in Cloud Data Centers

On a physical server where a single operating system issues instructions directly to the CPU, processor utilization is usually under 10%, except in unusual cases. In other words, the processor does useful work for less than 10% of the time available to it.

In a virtual environment, each virtual server is allocated virtual processors. A vCPU (virtual central processing unit) represents a thread: a stream of instructions that waits in a queue until the hypervisor schedules it onto one of the physical host's logical processors.
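To make the queueing idea concrete, here is a minimal Python sketch of a toy round-robin scheduler. It assumes every vCPU needs a fixed number of time slices of work and that at most one vCPU runs per logical processor per tick. The function name `simulate_round_robin` is hypothetical, and real hypervisors use far more sophisticated schedulers than plain round robin.

```python
from collections import deque


def simulate_round_robin(vcpu_work, logical_processors):
    """Toy hypervisor scheduler (hypothetical, for illustration only).

    vcpu_work maps a vCPU name to the number of time slices of work it needs.
    Each tick, up to `logical_processors` vCPUs at the front of the queue run
    for one slice; unfinished vCPUs go to the back of the queue.
    Returns a dict mapping each vCPU name to the tick at which it finished.
    """
    queue = deque(vcpu_work.items())  # (name, slices still needed)
    finished = {}
    tick = 0
    while queue:
        tick += 1
        # Take as many waiting vCPUs as there are logical processors.
        batch = [queue.popleft() for _ in range(min(logical_processors, len(queue)))]
        for name, remaining in batch:
            remaining -= 1
            if remaining <= 0:
                finished[name] = tick
            else:
                queue.append((name, remaining))  # wait for another turn
    return finished


work = {"vcpu0": 2, "vcpu1": 2, "vcpu2": 2, "vcpu3": 2}
print(simulate_round_robin(work, logical_processors=4))  # {'vcpu0': 2, 'vcpu1': 2, 'vcpu2': 2, 'vcpu3': 2}
print(simulate_round_robin(work, logical_processors=2))  # {'vcpu0': 3, 'vcpu1': 3, 'vcpu2': 4, 'vcpu3': 4}
```

With four logical processors, every vCPU finishes after two ticks. With only two, half of the vCPUs spend time waiting in the queue and finish later, even though the total amount of work is the same.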

When a user purchases a virtual server, they decide how many vCPUs to buy. Once servers are virtualized, several virtual servers share the same physical resources, so processor utilization is significantly higher. How much higher depends mainly on how much processing the applications on the virtual servers need, and on how many vCPUs you have created relative to the number of logical processors on the physical servers that host the virtualization platform.
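The relationship between vCPUs, logical processors, and utilization can be estimated with simple arithmetic. The Python sketch below is a rough model, not a capacity-planning tool: it assumes every vCPU is busy for the same average fraction of time and that load spreads evenly across the host's logical processors. The function names and the example host (32 logical processors, 20 virtual servers with 4 vCPUs each, 10% average load per vCPU) are hypothetical.

```python
def overcommit_ratio(total_vcpus, logical_processors):
    """Number of vCPUs created per logical processor on the physical host."""
    return total_vcpus / logical_processors


def estimated_host_utilization(vcpus_per_vm, avg_vcpu_load, logical_processors):
    """Rough host CPU utilization between 0.0 and 1.0.

    Assumes each vCPU is busy `avg_vcpu_load` of the time and that the load is
    spread evenly. Demand above the host's capacity cannot be served, so the
    estimate is capped at 1.0 (100%).
    """
    busy_processors_needed = sum(vcpus_per_vm) * avg_vcpu_load
    return min(busy_processors_needed / logical_processors, 1.0)


vms = [4] * 20  # 20 hypothetical virtual servers with 4 vCPUs each
print(overcommit_ratio(sum(vms), 32))              # 2.5
print(estimated_host_utilization(vms, 0.10, 32))   # 0.25
```

Under these assumptions, the host runs at about 25% utilization, well above the under-10% typical of a dedicated physical server. If the average load per vCPU rose to 50%, demand (40 busy processors) would exceed the 32 available, the estimate would hit its 100% cap, and vCPUs would start waiting in the queue.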

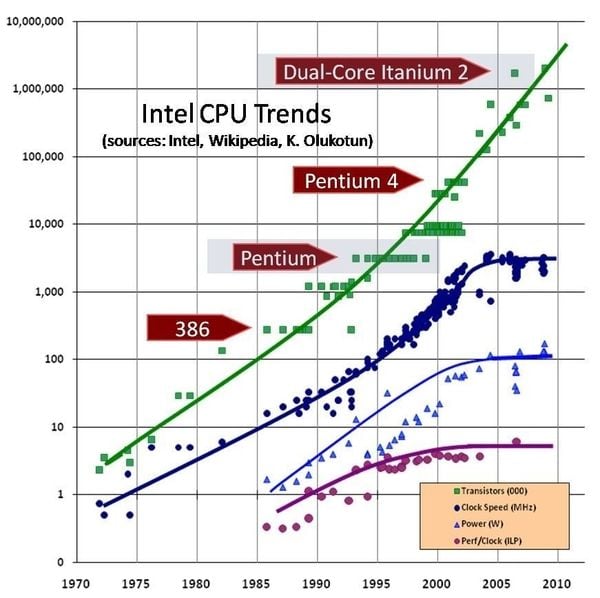

The Importance of High Clock-Rate

A vCPU can execute instructions only as fast as the core of the physical processor it runs on allows. That is why the operating frequency, or clock rate, of the physical processor matters: if part of a cloud is built from old servers with slow processors, the virtual servers running on those processors will be noticeably slower. Likewise, if other workloads in the same virtual environment push the physical processors to 100% of their capacity, leaving no free threads, the system can respond much more slowly.
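The effect of clock rate can be illustrated with the classic CPU performance equation: execution time = instruction count × cycles per instruction (CPI) ÷ clock rate. The Python sketch below assumes the older and newer processors need the same number of cycles per instruction, which is a simplification, since newer architectures usually also do more work per cycle. The `cpu_share` parameter is a hypothetical stand-in for contention: the fraction of the physical core's time the vCPU actually receives when other workloads compete for it.

```python
def cpu_time_seconds(instructions, cycles_per_instruction, clock_hz, cpu_share=1.0):
    """Execution time from the classic CPU performance equation.

    time = instructions * CPI / clock rate, stretched by 1 / cpu_share when the
    vCPU only gets part of the physical core's time (cpu_share is an assumed,
    simplified model of contention; 1.0 means no contention).
    """
    if not 0 < cpu_share <= 1:
        raise ValueError("cpu_share must be in (0, 1]")
    return instructions * cycles_per_instruction / clock_hz / cpu_share


job = 6e9  # hypothetical job: 6 billion instructions at CPI 1.0
print(cpu_time_seconds(job, 1.0, 2.0e9))                 # 3.0 (older 2.0 GHz server)
print(cpu_time_seconds(job, 1.0, 3.0e9))                 # 2.0 (newer 3.0 GHz server)
print(cpu_time_seconds(job, 1.0, 3.0e9, cpu_share=0.5))  # 4.0 (3.0 GHz, but contended)
```

In this toy model, a fast processor that is only half available to the vCPU ends up slower than an uncontended older one, which is why both clock rate and a low load on the host matter.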

In short, when a data center is being built or expanded, fast processors are a prerequisite for running advanced technology, and the ratio of virtual to logical processors should be kept low. At the time of writing, the Guinness World Record for the highest processor clock speed is held by AMD's Piledriver-based FX-8370, overclocked to 8.723 GHz.

Buying Processing Units as Part of Server Packages

Even though multiple virtual servers share the same physical resources, the system automatically ensures that each user can fully use their reserved resources at any time.

When you start using a cloud server service, you must purchase at least one vCPU, and any additional processing power is purchased in increments of one vCPU. Cloud server providers also set a limit on how much additional processing power you can add to your package.

These limits cover the features users can activate on their own through the management portal, and they are based on statistics about previous users' needs. If you need more processing power, you can ask your provider to make an exception and raise your limit.
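The purchasing rules described here (a minimum of one vCPU, whole-vCPU increments, and a provider-defined cap that can be raised on request) can be expressed as a simple validation check. This Python sketch is illustrative only: the function name, the return strings, and the 16-vCPU limit in the example are hypothetical, and each provider enforces its own rules.

```python
MIN_VCPUS = 1  # a cloud server package needs at least one vCPU


def check_vcpu_request(requested, provider_limit):
    """Classify a requested vCPU count against simple package rules.

    vCPUs are sold in whole increments of one, so the request must be an int.
    `provider_limit` is the self-service cap set by the (hypothetical) provider.
    """
    if not isinstance(requested, int) or isinstance(requested, bool):
        raise TypeError("vCPUs are purchased in whole increments of 1")
    if requested < MIN_VCPUS:
        return "rejected: at least 1 vCPU is required"
    if requested > provider_limit:
        return "contact provider: request exceeds the self-service limit"
    return "ok"


print(check_vcpu_request(4, provider_limit=16))   # ok
print(check_vcpu_request(24, provider_limit=16))  # contact provider: request exceeds the self-service limit
```

Returning a "contact provider" status rather than a hard rejection mirrors the idea that the cap is a self-service default that the provider can lift on request.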

Top Players on the Data Center Processors Market

For decades, two titans have competed for dominance in the CPU market: Intel and AMD. There are many other players, such as NVIDIA, Hewlett-Packard, Qualcomm, Acer, and MediaTek, but the battle has always come down to Intel and AMD. 2019 brought some genuinely game-changing events.

At the event where AMD launched its 7 nm processors, the company announced that Google and Twitter would use its second-generation EPYC processors (codenamed Rome) in their data centers. The EPYC Rome launch marks a dramatic plot twist in AMD's battle against Intel, which recently announced that its first 7 nm processors will not be available before 2021 (Intel's Ice Lake chips are built on a 10 nm process).

Intel is still the largest manufacturer of data center processors, and Google and Twitter have been the company's most important clients so far, which makes their move to AMD all the more significant. Meanwhile, AMD's latest products, combined with its low-pricing strategy, have quickly turned the company into a serious competitor.

In a press release, Bart Sano, Google's VP of Engineering, said that the second generation of AMD EPYC processors will help the company stay innovative. According to Sano, the scalability and performance of AMD's processors will expand Google's ability to innovate in its infrastructure and give its users more flexibility.

Conclusion

However unbelievable and sci-fi-like it may seem, the data center processor industry still has room to grow. As soon as 2020 started, we were swept away by big news from AMD, and now we are keeping an eye out for Intel's next move.

After all, the data center processor market is one of the most dynamic, exciting and innovative markets out there!