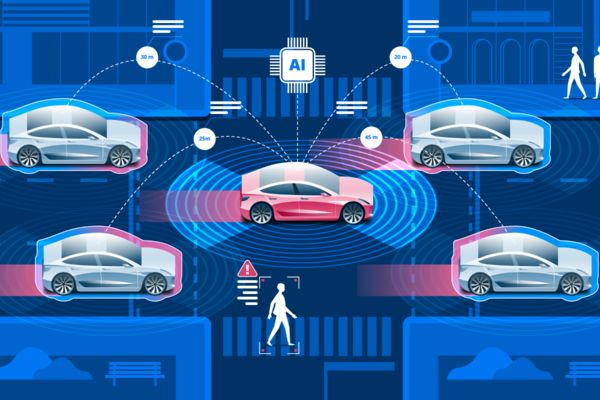

February 7, 2020

Artificial intelligence has been around for quite some time, and its constant development has disrupted industries and sectors alike with its performance-boosting and cost-cutting qualities.

At the same time, we’re witnessing the rise of data science, which can process, analyze, and make sense of enormous amounts of data. Not so long ago, it was practically impossible to interpret unstructured data; now, with Big Data technology, organizations see immense benefits from large-scale data collection and analysis.

AI brings an array of new possibilities, so let’s discuss five reasons to leverage it in the data center.

It Allows for Conserving Energy

Data centers consume a large amount of energy in order to function, and a large portion of that energy goes to cooling systems.

If we bear in mind that they power the entire internet, it’s clear why they emit as much CO2 as the airline industry.

For example, a typical Google search uses the same amount of energy required to illuminate a 60W bulb for 17 seconds and, as a result, produces 0.2 g of CO2. If this doesn’t sound like too much, imagine how many searches there are in just a day.
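To put those figures in perspective, here is a rough back-of-the-envelope calculation. The bulb wattage, duration, and CO2 figure come from the paragraph above; the daily search volume is a purely hypothetical round number chosen for illustration, not a reported statistic.

```python
# Figures quoted in the article.
BULB_WATTS = 60
SECONDS_LIT = 17
CO2_GRAMS_PER_SEARCH = 0.2

# ASSUMPTION: a hypothetical daily search volume, used only to show the scaling.
ASSUMED_SEARCHES_PER_DAY = 1_000_000_000

JOULES_PER_KWH = 3_600_000
GRAMS_PER_TONNE = 1_000_000


def energy_per_search_joules() -> float:
    """Energy of one search: power (W) times time (s) gives joules."""
    return BULB_WATTS * SECONDS_LIT


def daily_totals(searches_per_day: int) -> tuple[float, float]:
    """Return (energy in kWh, CO2 in tonnes) for one day of searches."""
    energy_kwh = energy_per_search_joules() / JOULES_PER_KWH * searches_per_day
    co2_tonnes = CO2_GRAMS_PER_SEARCH * searches_per_day / GRAMS_PER_TONNE
    return energy_kwh, co2_tonnes


if __name__ == "__main__":
    kwh, tonnes = daily_totals(ASSUMED_SEARCHES_PER_DAY)
    print(f"Per search: {energy_per_search_joules()} J")
    print(f"Per day (assumed volume): {kwh:,.0f} kWh, {tonnes:,.0f} t CO2")
```

Under that assumed volume, a per-search cost of about a thousand joules adds up to hundreds of thousands of kilowatt-hours a day, which is why small per-query numbers still matter at scale.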

Needless to say, energy consumption is expected to double as data traffic grows.

Credit: IBM Systems Magazine

Google has tackled this issue by introducing AI to optimize energy use in its data centers. With the help of this technology, Google has managed to reduce the energy consumption of its data centers’ cooling systems by 40%.

AI is capable of learning and analyzing temperature, testing flow rates, and evaluating cooling equipment. Different intelligent sensors can be deployed to spot sources of energy inefficiencies and optimize them autonomously.

Finally, optimized cooling systems will reduce wear and tear on the equipment.

It Will Reduce Downtime

Data centers occasionally suffer from power outages, which in turn lead to downtime. The cost of these incidents, both financial and in terms of user experience, can be very high: for 25% of enterprises worldwide, an hour of server downtime costs between $300,000 and $400,000.

In order to prevent such scenarios, organizations hire a number of professionals to monitor and predict outages.

However, this is a complex task: the staff must analyze and interpret many different issues to identify the root of a problem and predict an outage. AI, by contrast, can keep track of numerous parameters, including server performance, network congestion, and disk utilization, and use them to predict outages.

Besides this, AI-powered predictive engines can identify faulty areas that could cause the system to crash. Just as important is the autonomous nature of this technology: AI can be used to predict not only outages but also the users who could be affected by them, and to come up with a strategy for recovering from the outage.
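As a very small illustration of the idea of predicting trouble from a monitored parameter, the sketch below fits a straight line to disk utilization readings and estimates how long until a threshold is crossed. This is a deliberately simple stand-in, not how any particular product works; the function names, the 95% threshold, and the sample readings are all assumptions made for the example.

```python
from typing import Optional, Sequence


def fit_line(xs: Sequence[float], ys: Sequence[float]) -> tuple[float, float]:
    """Ordinary least-squares fit; returns (slope, intercept)."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need at least two paired samples")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("timestamps must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def hours_until_threshold(
    hours: Sequence[float],
    utilization_pct: Sequence[float],
    threshold_pct: float = 95.0,  # ASSUMPTION: illustrative alert level
) -> Optional[float]:
    """Estimate hours after the last sample until the trend hits the threshold.

    Returns None when utilization is flat or falling.
    """
    slope, intercept = fit_line(hours, utilization_pct)
    if slope <= 0:
        return None
    crossing_hour = (threshold_pct - intercept) / slope
    return max(0.0, crossing_hour - hours[-1])


if __name__ == "__main__":
    # Hypothetical hourly readings of disk utilization (percent).
    remaining = hours_until_threshold([0, 1, 2, 3, 4], [70, 72, 74, 76, 78])
    print(f"Estimated hours until 95% full: {remaining}")
```

A real predictive engine would combine many signals and a far richer model, but the principle is the same: learn the trend from past readings and raise the alarm before the limit is reached.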

It Will Optimize Workload Distribution

Predictive analytics will enable smarter workload distribution.

Back in the day, it used to be IT experts’ responsibility to optimize the performance of the servers in their company, thus making sure that workloads were properly distributed.

Well-optimized servers mean lower costs and better allocation of resources, and both these factors are of critical importance to the organization’s digital operation. But IT teams are often understaffed or lack the resources to keep an eye on this complex process 24/7.

AI uses powerful algorithms that can perform a large number of calculations in very little time, optimize storage, and determine load balancing in real time.
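To make the load-balancing idea concrete, here is a minimal sketch of one classic heuristic: place the heaviest workload first, always on the server that currently has the least load. It is a simple greedy rule, not an AI model, and the workload names, costs, and server names are hypothetical.

```python
import heapq


def assign_workloads(
    workloads: dict[str, float], servers: list[str]
) -> dict[str, list[str]]:
    """Greedy balancing: heaviest job first, onto the least-loaded server."""
    # Min-heap of (current load, server name); the lightest server pops first.
    heap = [(0.0, name) for name in servers]
    heapq.heapify(heap)
    placement: dict[str, list[str]] = {name: [] for name in servers}

    heaviest_first = sorted(workloads.items(), key=lambda kv: kv[1], reverse=True)
    for job, cost in heaviest_first:
        load, server = heapq.heappop(heap)
        placement[server].append(job)
        heapq.heappush(heap, (load + cost, server))
    return placement


if __name__ == "__main__":
    # Hypothetical workloads with relative costs.
    jobs = {"etl": 8, "web": 5, "cache": 4, "batch": 3}
    print(assign_workloads(jobs, ["a", "b"]))
```

An AI-driven scheduler would go further by predicting each workload’s future cost instead of taking it as given, but the assignment step often looks much like this.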

It Will Enable Unmanned Automation

Automation is one of the most important segments of AI, and recent developments have made it possible for organizations to experiment with the so-called “lights out” data centers. In a nutshell, these data centers don’t have to be monitored and supervised by people.

Credit: dotmagazine

Unmanned automation will make traditional data centers, which are overseen by human technicians, obsolete, even those capable of efficient computing and reduced data consumption. The goal is to achieve an even higher level of efficiency and autonomy through measures such as lower oxygen levels to reduce the risk of fire, more efficient cooling designs, and greater storage capacity from taller racks that robots can access.

AI-powered data centers in the future will be monitored remotely with the help of DCIM (data center infrastructure management) software, and thanks to unmanned automation, human error will be reduced to a minimum.

It Will Improve Security

It’s no secret that data centers are susceptible to different cyber threats, and hackers are always on the prowl, looking for some new ways to snatch sensitive data.

The trouble is that when they manage to worm their way into organizations’ networks, they can obtain access to personal and confidential information of millions of users.

For example, when Marriott International was hacked, the personal details of up to 500 million guests, such as names, email addresses, passport numbers, and phone numbers, were exposed.

The key to preventing cyber threats lies in anticipation and early detection.

That’s why many organizations hire data security experts to prevent these incidents. However, analyzing cyber attacks is a demanding and time-consuming task, which is where AI and its analytic capability can help. Namely, AI can learn what normal network behavior looks like, which means it will be able to notice any deviation from it. Such deviations are often the result of security threats.
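The “learn normal, flag deviations” idea can be sketched with a simple statistical baseline. The example below is an assumption-laden toy, not a production intrusion detector: it summarizes past traffic by its mean and standard deviation and flags any reading more than three standard deviations away. The function names, the threshold, and the sample numbers are all hypothetical.

```python
from statistics import mean, stdev
from typing import Sequence


def build_baseline(samples: Sequence[float]) -> tuple[float, float]:
    """Summarize normal behavior as (mean, sample standard deviation)."""
    return mean(samples), stdev(samples)


def is_anomalous(
    value: float,
    baseline: tuple[float, float],
    z_threshold: float = 3.0,  # ASSUMPTION: a common rule-of-thumb cutoff
) -> bool:
    """Flag a reading whose z-score against the baseline exceeds the cutoff."""
    mu, sigma = baseline
    if sigma == 0:
        # Perfectly constant history: any change at all counts as a deviation.
        return value != mu
    return abs(value - mu) / sigma > z_threshold


if __name__ == "__main__":
    # Hypothetical requests-per-minute readings from a quiet period.
    normal_traffic = [100, 102, 98, 101, 99, 100, 103, 97]
    baseline = build_baseline(normal_traffic)
    for reading in (104, 250):
        print(reading, "anomalous" if is_anomalous(reading, baseline) else "normal")
```

Real systems model many features at once and adapt the baseline over time, but even this simple version shows why a learned picture of “normal” makes unusual activity stand out.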

AI will also make it possible for the data center to detect malware and security gaps.

It’s clear that the future of data centers relies heavily on leveraging AI technology. These five reasons are the most important ones, but they’re just the tip of the iceberg: artificial intelligence and its subset technologies, such as machine learning and neural networks, will be a must for gaining a competitive edge and keeping pace with the latest trends.

Main Photo Credit: AiThority.com