
September 25, 2015

With fall now in full swing, temperatures will be getting colder, food will be getting pumpkin-spicier, and winter will be rearing its befouled mug. While some may appreciate the relief of the crisp, autumnal air, others will be pining for spring. And some things get no relief from the heat at all.

Yes, I’m talking about the servers within data centers which work around the clock and get no relief from the changing of the seasons.

Data center cooling is a *cough* hot topic, and we’ve written about it at length in the past. This time, though, we’re going to shift our focus away from what we normally cover when it comes to data center cooling.

Thanks to our innovative friends at Green Revolution Cooling (GRC), we’ve seen the case that cooling the air in a data center is less efficient than submerging the servers in liquid (oil, to be exact).

What Is the CarnotJet Oil Submersion Cooling System?

Before we get into the specifics of the CarnotJet system, many may be wondering how submerging electrical equipment in a liquid, even oil, could be safe for the infrastructure of a data center.

Well, oils made for this kind of work are what’s known as dielectric coolants. A dielectric, for the uninitiated, is (per Wikipedia) “an electrical insulator that can be polarized by an applied electric field.” This type of cooling has been used for decades in electrical transformers, capacitors, and similar equipment.

The technology is safe and efficient; the only hurdle is adoption. Concerns have been raised about the safety of dielectric coolants (think: leaks), and the benefits have never really outweighed the overall cost compared with other cooling methods.

GRC, however, has addressed these concerns with the CarnotJet system. CarnotJet uses a non-proprietary mineral oil blend, dubbed ElectroSafe, in which servers (including blades) can be entirely submerged.

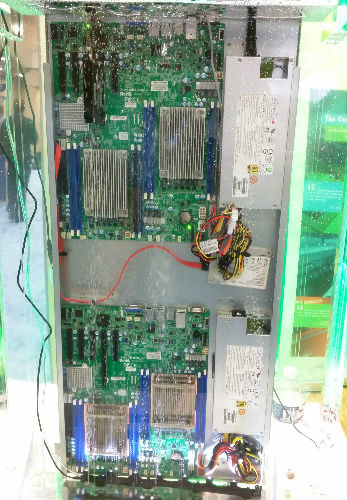

From HostingCon 2015: a server submerged in GRC’s ElectroSafe oil

GRC claims that:

ElectroSafe is “clean, odorless, dielectric, white mineral oil with 1,200x the heat retention capacity of air by volume. It is a specific, high-performance blend that offers exceptional cooling for data center servers at a low cost. It is electrically non conductive and compatible with all rack mounted OEM servers.”

The Benefits of ElectroSafe

Now that we’re a little more clear on what ElectroSafe is, let’s take a look at why it’s an amazing technological innovation.

NFPA Rated

The most important benefit is that ElectroSafe carries a 0-1-0 hazard rating under NFPA 704, the hazard-identification standard of the National Fire Protection Association (NFPA).

The first zero is the “health” rating; zero means the substance poses no health hazard.

The one is for “flammability”, which, like the other categories, is graded on a 0-4 scale. A one means the substance must be considerably preheated before it will ignite, so it is not very flammable.

The second zero stands for “reactivity”, which describes how the substance behaves under fire exposure conditions and when it contacts substances, such as water, used to extinguish fires. A zero means ElectroSafe is normally stable, even under fire exposure, and does not react with water.

Efficiency

Since this particular mineral oil has 1,200x the heat retention capacity of air by volume, we can assume that the oil doesn’t have to be kept as cool as air to provide the same cooling. And that assumption would be correct: GRC claims that “maintaining a coolant temperature of 38℃ is much less energy-intensive than cooling air to 25℃.” In other words, it takes about 1,200 times as much heat energy to raise the temperature of a given volume of ElectroSafe by one degree as it does for the same volume of air.
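To put the 1,200x figure in perspective, here is a back-of-the-envelope Python sketch of how much coolant has to flow past a server to carry its heat away, using the energy balance Q = C_v × V̇ × ΔT. It assumes a volumetric heat capacity for air of roughly 1,200 J/(m³·K) near room conditions and simply applies GRC’s 1,200x ratio to get the oil’s value; the 500 W server and 10 K temperature rise are hypothetical.

```python
"""Back-of-the-envelope coolant flow comparison: air vs. oil.

Assumptions (illustrative, not GRC data):
- Air near room conditions holds roughly 1,200 J per cubic metre per kelvin.
- The oil's capacity is GRC's "1,200x air by volume" figure applied literally.
"""

AIR_VOLUMETRIC_HEAT_CAPACITY = 1_200.0  # J/(m^3*K), approximate
OIL_TO_AIR_RATIO = 1_200.0  # GRC's claimed ratio, by volume
OIL_VOLUMETRIC_HEAT_CAPACITY = AIR_VOLUMETRIC_HEAT_CAPACITY * OIL_TO_AIR_RATIO


def required_flow_m3_per_s(heat_load_w: float,
                           volumetric_heat_capacity: float,
                           delta_t_k: float) -> float:
    """Volume flow a coolant needs to absorb heat_load_w while warming by delta_t_k.

    Energy balance: Q = C_v * V_dot * dT, so V_dot = Q / (C_v * dT).
    """
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return heat_load_w / (volumetric_heat_capacity * delta_t_k)


if __name__ == "__main__":
    load_w = 500.0  # hypothetical server heat output
    rise_k = 10.0   # hypothetical coolant temperature rise
    air = required_flow_m3_per_s(load_w, AIR_VOLUMETRIC_HEAT_CAPACITY, rise_k)
    oil = required_flow_m3_per_s(load_w, OIL_VOLUMETRIC_HEAT_CAPACITY, rise_k)
    print(f"air: {air * 1000:.2f} L/s")  # about 41.67 L/s
    print(f"oil: {oil * 1000:.4f} L/s")  # about 0.0347 L/s
```

Because the required flow scales inversely with volumetric heat capacity, the oil only has to move about 1/1,200 of the volume that air would for the same heat load and temperature rise, which is the intuition behind GRC’s efficiency claim.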

Additionally, there is no need for server fans under the CarnotJet system since, well, there’s no airflow needed to cool the components. Server fans draw a pretty significant amount of power, which oil submersion saves.

Longevity

ElectroSafe will last practically forever, since it won’t evaporate when exposed to air.

How the CarnotJet System Works

The system consists of four major components:

- The tank, a horizontal 42U rack.

- The pump, which includes the heat exchanger and strainer.

- The control system.

- The cooling tower, which extracts heat from the entire system.
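To show how these components relate, here is a deliberately simplified Python model of the loop. It is a hypothetical illustration, not GRC’s control logic: it assumes a well-mixed tank, a heat exchanger that returns oil at a fixed 30℃ (standing in for the cooling tower), a proportional pump controller, and made-up values for tank volume, server load and pump gain. Only the 38℃ setpoint comes from GRC’s quoted figure.

```python
"""Toy model of an immersion-cooling loop with a proportional pump controller.

Everything here is a hypothetical illustration, not GRC's control logic:
a well-mixed tank of oil absorbs server heat, and the pump sends oil through
a heat exchanger that returns it at a fixed supply temperature (the cooling
tower is assumed to hold that supply temperature steady).
"""

OIL_VOLUMETRIC_HEAT_CAPACITY = 1.44e6  # J/(m^3*K), assumed (1,200 x air)
TANK_VOLUME_M3 = 1.0                   # hypothetical oil volume in the tank
SUPPLY_TEMP_C = 30.0                   # hypothetical oil temp leaving the heat exchanger
SETPOINT_C = 38.0                      # coolant temperature quoted by GRC
PUMP_GAIN = 0.01                       # m^3/s of flow per kelvin above setpoint (hypothetical)
MAX_FLOW_M3_PER_S = 0.01               # hypothetical pump limit


def pump_flow(tank_temp_c: float) -> float:
    """Proportional control: pump harder the further the tank is above setpoint."""
    error_k = tank_temp_c - SETPOINT_C
    return min(max(PUMP_GAIN * error_k, 0.0), MAX_FLOW_M3_PER_S)


def simulate(server_load_w: float, start_temp_c: float,
             seconds: int, dt_s: float = 1.0) -> float:
    """Euler-integrate the tank energy balance and return the final temperature.

    C_v * V * dT/dt = P_servers - C_v * flow * (T_tank - T_supply)
    """
    temp_c = start_temp_c
    for _ in range(int(seconds / dt_s)):
        flow = pump_flow(temp_c)
        heat_removed_w = OIL_VOLUMETRIC_HEAT_CAPACITY * flow * (temp_c - SUPPLY_TEMP_C)
        d_temp = (server_load_w - heat_removed_w) / (
            OIL_VOLUMETRIC_HEAT_CAPACITY * TANK_VOLUME_M3)
        temp_c += d_temp * dt_s
    return temp_c


if __name__ == "__main__":
    final = simulate(server_load_w=20_000.0, start_temp_c=30.0, seconds=3_600)
    print(f"tank temperature after one hour: {final:.2f} C")
```

With these numbers the tank settles at roughly 38.2℃ rather than exactly 38℃, the steady-state offset typical of a purely proportional controller; a production controller would likely add integral action to remove it.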

Check out the system in action in a video we captured during the 2015 HostingCon in San Diego:

The initial shock of seeing a server in what looks like water is a little disturbing. We’ve all grown up with a don’t-put-the-toaster-in-the-bathtub mentality. But seeing it work firsthand was awe-inspiring. In fact, we were given a USB stick submerged in ElectroSafe to see the magic in action. And you know what? It worked!

You can read more about the physics behind the CarnotJet system by downloading GRC’s “Oil Submersion Cooling” white paper.

Below you can find a gallery of some of the more interesting and compelling data pulled directly from the white paper:


How Oil Submersion Compares to Other Data Center Cooling Methods

As we’ve detailed, oil submersion compares very favorably with traditional data center cooling methods. But others are out there too, either trying to perfect air cooling inside the data center or, like GRC, trying a different cooling method altogether.

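One of the most striking claims GRC puts forth in the linked white paper is that the CarnotJet system achieves a Power Usage Effectiveness (PUE) of 1.03. PUE is total facility power divided by the power delivered to IT equipment, so 1.0 is the theoretical ideal and 1.03 means overhead amounts to only about 3% of the IT load. That’s much lower than air-cooled data centers achieve, and it will be very difficult to beat. If you don’t believe me, check out some of Google’s PUE numbers on its data center efficiency page.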
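To make the 1.03 figure concrete, the short Python sketch below applies the PUE definition (total facility power divided by IT equipment power) to a hypothetical 1 MW IT load. The 1.03 value is GRC’s claim; the 1.5 comparison value is an arbitrary placeholder for an air-cooled site, not a measured figure from Google or anyone else.

```python
"""PUE arithmetic: how much power goes to overhead (cooling, power delivery, etc.)."""


def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power Usage Effectiveness = total facility power / IT equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT load must be positive")
    return total_facility_kw / it_equipment_kw


def overhead_kw(it_equipment_kw: float, pue_value: float) -> float:
    """Power drawn by everything other than the IT equipment itself."""
    return it_equipment_kw * (pue_value - 1.0)


if __name__ == "__main__":
    it_load_kw = 1_000.0  # hypothetical 1 MW of servers
    hours_per_year = 24 * 365
    for label, value in [("oil submersion (GRC claim)", 1.03),
                         ("hypothetical air-cooled site", 1.5)]:
        kw = overhead_kw(it_load_kw, value)
        print(f"{label}: PUE {value}, overhead {kw:.0f} kW, "
              f"{kw * hours_per_year / 1000:.0f} MWh/year")
```

Overhead scales with PUE − 1, so moving from 1.5 to 1.03 shrinks it from 500 kW to 30 kW in this example.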

Moreover, this piece from Data Center Knowledge ponders whether PUE has reached its limit, but doesn’t offer many numbers close to oil submersion’s. This goes back to the problem with oil submersion cooling systems we mentioned earlier: adoption.

Getting larger data centers to see the benefits of an oil submersion system can go a long way toward decreasing the amount of power data centers suck up every year.

Other companies are trying to solve the efficiency problems of cooling as well.

Other Data Center Cooling Innovations

Blackiron, a Toronto-based company, has developed a cooling method that significantly cuts down on water waste. By using outside air, its DC3 facility is able to meet or exceed LEED and Uptime Institute certification requirements. Its precision cooling technology avoids water-based condensers and instead pipes in cool air from outside. Blackiron says power density is kept to just 15 kW per rack and that each system has its own evaporator coil, digital compressor and electronically controlled fans for greater efficiency.
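Outside-air cooling generally works by choosing a mode based on the outdoor temperature. The Python sketch below shows generic air-side economizer logic; it is not Blackiron’s documented control scheme, and the 2 K approach margin, 35℃ return-air temperature and 20℃ supply setpoint are hypothetical. The compressor modes are included on the assumption that per-system compressors like the ones Blackiron describes cover days when outside air alone isn’t cool enough.

```python
from enum import Enum


class CoolingMode(Enum):
    FREE_COOLING = "outside air only"
    PARTIAL = "outside air plus compressor trim"
    MECHANICAL = "compressor only"


def choose_mode(outside_c: float,
                supply_setpoint_c: float,
                approach_k: float = 2.0,
                return_air_c: float = 35.0) -> CoolingMode:
    """Pick a cooling mode from the outdoor air temperature (hypothetical thresholds).

    - Outside air at least `approach_k` below the supply setpoint can do the job alone.
    - Outside air that is still cooler than the hot return air helps, so use it
      and let the compressor make up the difference.
    - Otherwise outside air would add heat, so rely on the compressor.
    """
    if outside_c <= supply_setpoint_c - approach_k:
        return CoolingMode.FREE_COOLING
    if outside_c < return_air_c:
        return CoolingMode.PARTIAL
    return CoolingMode.MECHANICAL


if __name__ == "__main__":
    for temp in (-5.0, 24.0, 38.0):  # sample outdoor temperatures in C
        print(temp, choose_mode(temp, supply_setpoint_c=20.0).value)
```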

IBM developed a robot that can measure and adjust the cooling of a data center floor. The robot can identify the types of floor tiles based on the data center’s layout and display a 3D heat map indicating which areas need attention and adjustment. This automates a task previously done by people pushing mobile carts around data centers to take the same measurements.
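A 3D heat map like this can be built by bucketing position-tagged temperature readings into grid cells and averaging each cell. The Python sketch below is a hypothetical illustration, not IBM’s implementation: it assumes each reading is an (x, y, height, temperature) tuple in metres and ℃, and uses 0.6 m cells on the assumption that this roughly matches a standard raised-floor tile.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

Reading = Tuple[float, float, float, float]  # (x_m, y_m, height_m, temp_c)
Cell = Tuple[int, int, int]                  # grid indices along x, y and height


def build_heat_map(readings: Iterable[Reading],
                   cell_size_m: float = 0.6) -> Dict[Cell, float]:
    """Average every reading that falls into the same 3D grid cell."""
    totals: Dict[Cell, float] = defaultdict(float)
    counts: Dict[Cell, int] = defaultdict(int)
    for x, y, z, temp in readings:
        cell = (int(x // cell_size_m), int(y // cell_size_m), int(z // cell_size_m))
        totals[cell] += temp
        counts[cell] += 1
    return {cell: totals[cell] / counts[cell] for cell in totals}


def hottest_cell(heat_map: Dict[Cell, float]) -> Tuple[Cell, float]:
    """Return the cell with the highest average temperature, a candidate for attention."""
    cell = max(heat_map, key=heat_map.get)
    return cell, heat_map[cell]


if __name__ == "__main__":
    samples = [
        (0.1, 0.1, 0.5, 22.0),  # hypothetical readings from one survey pass
        (0.2, 0.3, 0.4, 24.0),
        (1.3, 0.1, 1.5, 31.0),
    ]
    heat_map = build_heat_map(samples)
    print(heat_map)
    print(hottest_cell(heat_map))
```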

Photo Credit: enterprisetech.com

The folks at RF Code have partnered with CenturyLink to tackle this problem. RF Code installed matchbox-sized, wire-free sensor tags at each server rack inlet. These tags were linked to a Power over Ethernet (PoE) system, with RF Code readers installed in the data center’s ceiling. This standalone, secure system was then fully integrated into the company’s building management system (BMS). With it in place, CenturyLink was able to program its Computer Room Air Handlers (CRAHs) to account for hotspots and any other temperature disparities throughout the center.
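Once rack-inlet readings are flowing into a BMS, flagging hotspots can be as simple as comparing a short rolling average per rack against a limit. The Python sketch below is hypothetical: the rack IDs and data layout are invented (this is not RF Code’s or CenturyLink’s software), the three-reading window is arbitrary, and the 27℃ default is the upper end of ASHRAE’s commonly cited recommended inlet range, used here as an assumption.

```python
from statistics import mean
from typing import Dict, List

# Assumed default: upper bound of ASHRAE's recommended server-inlet range.
ASHRAE_RECOMMENDED_MAX_INLET_C = 27.0


def find_hotspots(readings_by_rack: Dict[str, List[float]],
                  limit_c: float = ASHRAE_RECOMMENDED_MAX_INLET_C,
                  window: int = 3) -> Dict[str, float]:
    """Map each rack whose recent average inlet temperature exceeds limit_c
    to how many kelvin it is over. Averaging the last `window` readings
    smooths out single noisy samples."""
    hotspots: Dict[str, float] = {}
    for rack_id, temps in readings_by_rack.items():
        if not temps:
            continue  # no data from this tag yet
        recent_avg = mean(temps[-window:])
        if recent_avg > limit_c:
            hotspots[rack_id] = round(recent_avg - limit_c, 2)
    return hotspots


if __name__ == "__main__":
    inlet_temps = {  # hypothetical tag readings, oldest first, in C
        "rack-A01": [24.0, 24.5, 25.0],
        "rack-B07": [26.0, 28.0, 29.0, 30.0],
        "rack-C03": [],
    }
    print(find_hotspots(inlet_temps))
```

The flagged racks and their excess temperatures are the kind of input a BMS could use when tuning CRAH output.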

While the innovations above aim to improve data center air cooling, none of them represents as fundamental a change as oil submersion. However, despite its benefits, oil submersion’s initial cost can be a bit jarring, and there aren’t many experts out there who can service the servers if the need arises.

Until these initial infrastructure costs come down and the stigma around liquid-submersion cooling fades, adoption of cooling innovations like CarnotJet will be difficult.

Eventually, however, there will come a time when traditional cooling methods are no longer cost-effective, and companies like GRC will be waiting in the wings.